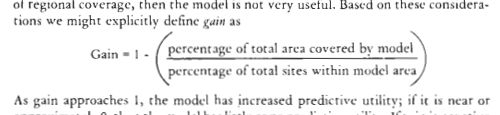

In the past few weeks I was involved in two discussions with archaeological colleagues on the process and issues associated with Archaeological Predictive Modeling (APM). One conversation was via Twitter with Dr. Colin Wren of the University of Colorado and the other was with PhD-in-training Claire Boardman. The two conversations covered different aspects of the APM topic, but each seemed complementary to the other. I am posting excerpts from these discussions here 1) for posterity and 2) because I think they are really interesting questions which rarely get much attention outside of peer-to-peer conversations. Also, truth be told, locational modeling of archaeological sites is still at the toddler stage (at best) in archaeology, and simple questions like these are very valid discussion points. Anyway, perhaps you will find this interesting if you don’t know much about APM, useful if you are looking for a basis to instruct from, or useful if you want to frame your own thoughts and build from there.

The discussion with Wren was more specific to the grand challenges of APM: the why and how of a variety of approaches. Colin had some great probing questions about theoretical issues, but I think I only answered from the methodological perspective. I am often stuck there because I think we need applicable methods to explore the consequences of our data and theories before we know what is a useful approach and what is not. Below is a brief summary of what Colin and I discussed. The conversation with Boardman was more about how APM is used in an applied setting, specifically Cultural Resources Management (CRM). My answers to the questions she posed are pretty generalized and could be further sharpened with a more in-depth discussion.

Wren Discussion Summary

Big issues: representing uncertainty, overcoming poor samples, adjusting for sample and variable correlations, establishing model thresholds based on model purpose.

Solutions? Developing a framework of model techniques/approaches that serve specific purposes/conditions (e.g. what works, what doesn’t, and why), establishing common data sets to serve as benchmarks so new methods are comparable, and convincing other archaeologists this is a worthwhile thing to teach their students!

Some background on the “Issues” from the discussion:

- Uncertainty – documenting, propagating, and visualizing uncertainty all around our models. It starts with acknowledging and accepting uncertainty, then moves to modeling it (bootstrapping, credible intervals, Bayesian approaches, modeling different scenarios, etc…). Visualizing can be done via multiple maps, varying thresholds, bivariate color legends…

- Poor Samples & correlation – sample location bias, non-systematic sampling, and imbalanced samples are all pretty serious issues. How to fix??? Let me know if you find out. Some components may include random sampling, explicit spatial variance/covariance, rebalancing, over/under sampling, SMOTE, hierarchical models, and what I am currently exploring, distribution regression [good explanation (PDF)].

- Thresholds – I think this is the single most overlooked part of current modeling practices. Any time we classify into present/absent or high/medium/low, we need to draw lines in the continuous distribution of probabilities/potential/sensitivity. This should be done very cautiously and with respect to the weight/risk assigned to false-positives vs. false-negatives.
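
One way to make the false-positive vs. false-negative trade-off explicit is to attach a relative cost to each error type and pick the threshold with the lowest total cost. The sketch below is only an illustration under stated assumptions: the probabilities, labels, and cost ratios are made-up values, and `total_cost` and `best_threshold` are hypothetical helper names rather than anything from a real model.

```python
# Hypothetical predicted probabilities for ten cells and their labels
# (1 = known site present, 0 = background). All values are invented.
probs = [0.95, 0.80, 0.65, 0.40, 0.30, 0.70, 0.50, 0.35, 0.20, 0.10]
labels = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]


def total_cost(threshold, probs, labels, cost_fn, cost_fp):
    """Cost of calling a cell site-likely when its probability >= threshold."""
    # False negatives: site cells that fall below the threshold.
    fn = sum(1 for p, y in zip(probs, labels) if y == 1 and p < threshold)
    # False positives: background cells at or above the threshold.
    fp = sum(1 for p, y in zip(probs, labels) if y == 0 and p >= threshold)
    return cost_fn * fn + cost_fp * fp


def best_threshold(probs, labels, cost_fn, cost_fp):
    """Try each observed probability as a threshold; keep the cheapest."""
    candidates = sorted(set(probs))
    return min(
        candidates,
        key=lambda t: total_cost(t, probs, labels, cost_fn, cost_fp),
    )


# Protective purpose: a missed site is assumed 10x worse than a wasted test.
protective = best_threshold(probs, labels, cost_fn=10, cost_fp=1)

# Targeted purpose: a wasted test is assumed 3x worse than a missed site.
targeted = best_threshold(probs, labels, cost_fn=1, cost_fp=3)

print("protective threshold:", protective)
print("targeted threshold:", targeted)
```

The same set of predictions yields a low threshold for the protective purpose and a high one for the targeted purpose, which is the point: the line is a consequence of the stated risk, not of the model alone.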

Boardman Q&A

Development projects:

What is the main reason for creating predictive models?

Assuming we are considering a “predictive model” to be the forecasting of archaeological site locations based on correlated covariates (environmental or otherwise), the main reasons are to scope the field effort and help in budgeting. They allow us to estimate the number of holes we will have to dig and where we will likely target. They also give us a visual vehicle to show the State Historic Preservation Office (SHPO) that we have considered sensitivity and what we think about it across the project area. On a larger scale, they can be used to estimate potential impacts of various project alternatives. In this sense, the % impact of site-likely area (or whatever loss metric you choose) can be compared relatively between alternatives. Models can also be made for research purposes, as a model that seeks to understand the inference of model parameters will also generate predictions and is typically validated by them. There are plenty of other reasons that one may want to generate predictions from models that deal with site locations, but these are the most common.

What project types/locations would you normally create a predictive model for?

For almost any project, but they are more effective on larger and linear projects like pipelines and highways. The Pennsylvania model I referenced above is now serving as a base model for any work done in that state. Firms are free to make their own for a project, but need to reference it to the state model and compare findings.

At what stage in the development planning approval process?

Sometimes as early as the proposal stage, to get a sense of cost. Otherwise at the “Phase IA” stage, which is the initial assessment of sensitivity and potential project impacts prior to doing field work.

When you use predictive modelling:

Do you use a standard model design or create a bespoke model for each project?

A little of both. There is a standard set of parameters we use for the very basic weighted overlay model, but they can be adjusted based on physiographic setting or prior knowledge. If there are a number of known sites available to fit better parameters to, we do that. Overall, the design of the model is based on the purpose. If the purpose is to generate a model that can be easily discussed with the SHPO or client, has variables that all archaeologists will be familiar with, and has easily discussed assumptions, the simple weighted overlay is the most common. If in addition to these goals I want to get a better understanding of site distribution in the study area and the marginal effect of variables on site locations, I may make an additional model via logistic regression, random forests, or a random effects model, depending on my goals. If a Principal Investigator has a certain research question or prior information on the area, we can make a model that is based on these ideas as well.
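
For readers who have not seen one, a weighted overlay can be sketched in a few lines. This is a toy illustration, not the parameter set described above: the three layers, their 0/1 scores, the relative weights, and the class breaks are all assumptions chosen to keep the arithmetic easy to follow.

```python
# Tiny 2x3 rasters of reclassified scores (1 = favorable, 0 = unfavorable).
# Layer names and values are hypothetical.
layers = {
    "slope": [[1, 1, 0], [1, 0, 0]],
    "water": [[1, 0, 0], [1, 1, 0]],
    "soil": [[1, 1, 1], [0, 1, 0]],
}

# Relative weights (assumed); they are normalized by their sum below.
weights = {"slope": 5, "water": 3, "soil": 2}


def weighted_overlay(layers, weights):
    """Return a grid of weighted-average scores scaled to 0..1."""
    names = list(layers)
    n_rows = len(layers[names[0]])
    n_cols = len(layers[names[0]][0])
    total_weight = sum(weights[name] for name in names)
    return [
        [
            sum(weights[name] * layers[name][r][c] for name in names)
            / total_weight
            for c in range(n_cols)
        ]
        for r in range(n_rows)
    ]


def classify(score, moderate=0.4, high=0.7):
    """Bin a 0..1 score into sensitivity classes using assumed breaks."""
    if score >= high:
        return "high"
    if score >= moderate:
        return "moderate"
    return "low"


scores = weighted_overlay(layers, weights)
classes = [[classify(s) for s in row] for row in scores]

for row in classes:
    print(row)
```

The appeal of this design is that every number in it can be explained in a meeting; the drawback is that the weights and breaks are judgment calls unless known sites are available to check them against.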

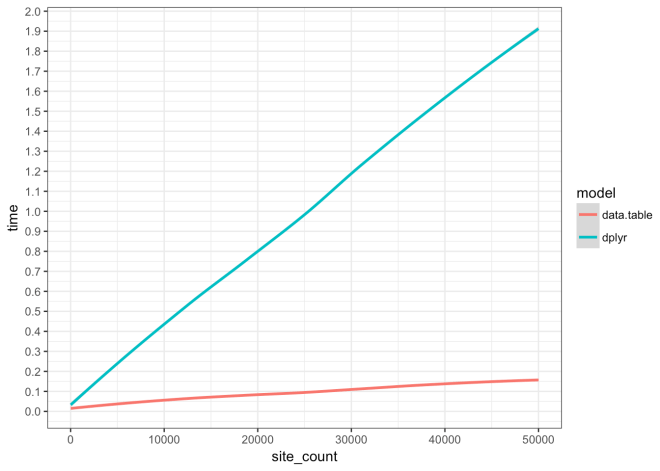

Do you create your models using a GIS application and if so, which one?

A lot of it is done in ArcGIS, but also a lot is done in the statistical programming language R. R is essentially a GIS, as well as a full-fledged programming language. We use QGIS at times as well. In ArcGIS the raster calculator and other raster tools within the Model Builder framework are helpful, but it can be buggy and slow, and it is not very open or reproducible. R has a steeper learning curve, but offers nearly limitless ability to develop, visualize, and understand your models. QGIS is a great alternative to ArcGIS (and sometimes the go-to); it is fully capable.

On average how many models do you create in a year?

Speaking strictly of sensitivity models for archaeological locational data (aka “predictive models”), perhaps a dozen final models, but dozens of sub-models and candidate models. The year and a half of building the PA model involved thousands or tens of thousands of models. However, we also create many archaeological models that do not serve the basic “predictive model” goals.

In your predictive models:

What datasets do you commonly use in your models?

1/3 arc-second (~10-meter) DEM, National Hydrography Dataset (NHD), National Wetlands Inventory (NWI), surficial and bedrock geology, many derivations of these base variables (e.g. variation in slope over a 3km neighborhood, cost-distance, or elevation below spring head), and known archaeological site locations. Viewsheds, cost-paths, and proximity to cultural locations are also possible choices. Things that are often avoided are elevation, density of known sites, and proximity to known sites, unless they are explicitly required for your purpose.

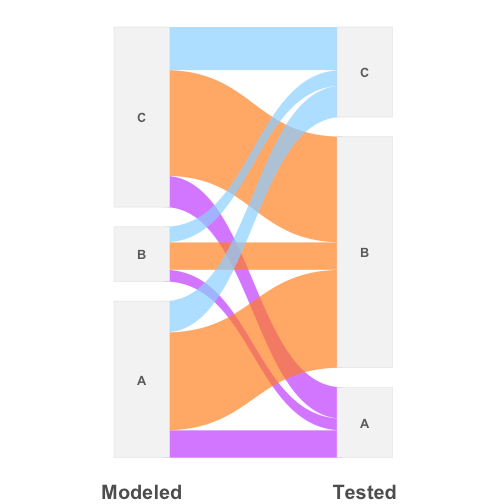

What method(s) do you use to validate your model?

Entirely depends on the model. For simple weighted overlay models with no known sites, validation is based on the archaeologist’s intuition. For weighted models with sites available, the presence/absence of sites is the validation. For statistical models (regression, random forest, GLMM, etc…), the models have metrics such as AIC, BIC, R^2, sensitivity/specificity, AUC, and any number of other things. A test set can be held out to test on “independent” data that was not used in the model building, or better yet, k-fold cross-validation can be used to create many hold-out samples. The point of validation is not simply to put a number to accuracy, but to balance accuracy vs. precision and bias vs. variance.
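
To make the k-fold idea concrete, here is a minimal sketch using only the Python standard library. Everything in it is an assumption for illustration: the distance-to-water values and labels are invented, and the "model" is a deliberately trivial cutoff rule (`fit_cutoff`, a hypothetical helper) standing in for a real regression or random forest. Plain accuracy is used only because the toy sample is balanced.

```python
import random


def k_fold_indices(n, k, seed=0):
    """Yield (train, test) index lists so each index is tested exactly once."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i, test in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test


def fit_cutoff(x, y):
    """Toy model: midpoint between the mean of site and background values."""
    site = [xi for xi, yi in zip(x, y) if yi == 1]
    back = [xi for xi, yi in zip(x, y) if yi == 0]
    return (sum(site) / len(site) + sum(back) / len(back)) / 2


def predict(cutoff, x):
    """Call a cell site-likely (1) when it is closer to water than the cutoff."""
    return [1 if xi < cutoff else 0 for xi in x]


def cross_validate(x, y, k=5, seed=0):
    """Fit on k-1 folds, score on the held-out fold, repeat for every fold."""
    fold_scores = []
    for train, test in k_fold_indices(len(x), k, seed):
        cutoff = fit_cutoff([x[i] for i in train], [y[i] for i in train])
        preds = predict(cutoff, [x[i] for i in test])
        correct = sum(1 for p, i in zip(preds, test) if p == y[i])
        fold_scores.append(correct / len(test))
    return fold_scores


# Invented distance-to-water values (meters) and site labels.
distance_to_water = [50, 80, 120, 150, 400, 300, 450, 500, 600, 90]
site_present = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]

scores = cross_validate(distance_to_water, site_present, k=5)
print("per-fold accuracy:", scores)
print("mean accuracy:", sum(scores) / len(scores))
```

The spread of the per-fold scores is as informative as their mean: a model whose held-out performance swings widely between folds is showing the variance side of the bias vs. variance balance. Note that a random split like this ignores spatial clustering of sites, which a real validation would need to consider.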

What level of accuracy/precision do you expect/accept?

Completely depends on the purpose of the model. Any model is capable of 100% accuracy if the entire study area is considered site-likely, or 0% accuracy if it is entirely site-unlikely, so the answer is somewhere in between. [The basis of every model should be the purpose for which it is intended, and all modeling methods grow from there. However, the most common approach in archaeology is to build a model, then find a purpose for it.] If it were a model designed to find the most highly sensitive locations because you wanted to find a specific type of site for testing, the overall accuracy may be low but the precision high, as you are targeting a part of the distribution and don’t care if you miss some outliers. If your model is to broadly protect all potential site-likely areas regardless of survey time or funding, you may choose high accuracy and low precision, as you don’t mind a model that considers vast areas as site-likely as long as 100% of sites are accounted for. (I write about this topic a bunch in the 7 volumes of the PA model report).

Far too often, people just make a general model, apply ad-hoc thresholds that achieve an accuracy/precision that “looks good”, and then present it as capable of fitting every purpose. In actuality it is generalized out of fitting any purpose. The key is to balance accuracy/precision on top of bias and variance. A model with a great looking accuracy/precision balance (even considering model purpose) can result from an over-fit model with high variance. So the model looks great on the known data, but does not generalize well to new data. On the other hand, a model with a pretty bad apparent accuracy/precision balance can be built on a model that balances bias and variance, so it generalizes to new data much better than the over-fit model that had much better looking accuracy/precision. In the end, you hope to maximize the accuracy that is possible given your dataset, variables, signal-to-noise ratio, and model (assumptions), while minimizing your risk of overfitting relative to the desired purpose of the model.

It’s turtles all the way down… For the PA model, sensitivity class boundary thresholds for individual models were optimized so that no less than 85% of known site-present cells (10×10-meter) were correctly included within the combined high and moderate sensitivity area, which covered no less than 33% of the study area. This equates to a target sensitivity of 0.85 and specificity of 0.67. The fact that it is “no less than 33%” is because we need to leave plenty of room for false-positives, as they are the locations where we hope to look for sites (they are false-positives until a site is found, then they are true-positives). Maximizing sensitivity (the share of sites correctly identified) is great, but maximizing specificity (the share of background area the model considers site-unlikely) is dangerous because it will lead to picking an over-fit model; bad news. It is good to pick a target specificity based on the project’s goals.
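
The logic of picking a threshold from a site-capture target can be sketched as follows. This is not the PA model procedure, only a minimal illustration of the idea: the 20 site scores and the 100-cell study area are invented, and `threshold_for_capture` is a hypothetical helper.

```python
def threshold_for_capture(site_scores, target_pct=85):
    """Highest threshold that keeps at least target_pct% of site cells."""
    ranked = sorted(site_scores, reverse=True)
    # Integer ceiling of target_pct% of the site count (avoids float rounding).
    needed = (target_pct * len(ranked) + 99) // 100
    return ranked[needed - 1]


def share_at_or_above(scores, threshold):
    """Fraction of cells whose score is at or above the threshold."""
    return sum(1 for s in scores if s >= threshold) / len(scores)


# Invented model scores at 20 known site-present cells.
site_scores = [
    0.95, 0.92, 0.90, 0.88, 0.85, 0.83, 0.80, 0.78, 0.75, 0.74,
    0.72, 0.70, 0.69, 0.68, 0.67, 0.66, 0.66, 0.50, 0.30, 0.10,
]

# Invented scores for every cell in a 100-cell study area (0.00 to 0.99).
study_area_scores = [i / 100 for i in range(100)]

threshold = threshold_for_capture(site_scores, target_pct=85)
sites_captured = share_at_or_above(site_scores, threshold)
area_site_likely = share_at_or_above(study_area_scores, threshold)

print("threshold:", threshold)
print("share of site cells captured:", sites_captured)
print("share of study area classed site-likely:", area_site_likely)
```

Reporting the two shares together matters: capturing 85% of sites means little until you also know how much of the study area had to be flagged to do it.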

*I used the term accuracy here as a stand-in for any measure of model correctness, but in archaeological modeling, where the site-present and background samples are frequently severely imbalanced, the use of accuracy (the percent of observations correctly classified) will be very misleading. This is because the larger number of correctly identified site-unlikely background observations will swamp the fewer incorrect site-likely observations and suggest a very high accuracy when the class we care about (site-likely) may have a very low accuracy. A metric that weights for class is preferable.
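
A small worked example shows how large the gap can be. The confusion-matrix counts below are invented for illustration (100 site cells among 10,000), and balanced accuracy is used as one example of a class-weighted metric, not as the metric from any particular report.

```python
# Invented confusion matrix for 10,000 cells, of which 100 are site-present.
true_pos = 20     # site cells correctly classed site-likely
false_neg = 80    # site cells missed
true_neg = 9800   # background cells correctly classed site-unlikely
false_pos = 100   # background cells classed site-likely

total = true_pos + false_neg + true_neg + false_pos

# Plain accuracy: share of all cells classified correctly.
accuracy = (true_pos + true_neg) / total

# Per-class rates: how well each class is recovered on its own.
site_rate = true_pos / (true_pos + false_neg)
background_rate = true_neg / (true_neg + false_pos)

# Balanced accuracy: the two class rates averaged with equal weight.
balanced_accuracy = (site_rate + background_rate) / 2

print(f"accuracy:          {accuracy:.3f}")
print(f"site-class rate:   {site_rate:.3f}")
print(f"balanced accuracy: {balanced_accuracy:.3f}")
```

Here the model misses 80 of 100 sites yet still scores above 98% on plain accuracy, while the class-weighted figure sits near 0.59 and tells a much more honest story.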

Do you ever use predictive modelling for non-commercial / research projects?

All the time. It has been my main area of study for the past 20 years. My blog has some examples.