Reproducible research… so hot right now. This post is about a way to use “containers” to make your analysis more reproducible. If you are familiar with Docker containers and reproducibility, you can probably skip most of this and check out the liftr code snippets towards the end. If you are more like, “what the heck is this container business?”, then I hope this post gives you a bit of insight into how to turn basic R scripts and Rmarkdown documents into fully reproducible computing environments that anyone can run. The process is based on the Docker container platform and the liftr R package. So if you want to hear more about using liftr for reproducible analysis in R, please continue!

- Concept – Learn to create and share a Dockerfile with your code and data to more faithfully and accurately reproduce analysis.

- User Level – Beginner to intermediate level R user; some knowledge of Rmarkdown and the concept of reproducibility

- Requirements – Recent versions of R and RStudio; Docker; Internet connection

- Tricky Parts

  - Installing Docker on your computer (it took only 3 clicks to install on my MacBook)

  - Documenting software setup in YAML-like metadata (a few extra lines of code)

  - Conceptualizing containers and how they fit with a traditional analysis workflow (more on that below)

- Pay Off

  - A level-up in your ability to reproduce your own and others' code and analysis

  - Better control over what your software and analysis are doing

  - Confidence that your analytic results are not due to some bug in your computer/software setup

More than just sharing code…

So you do your analysis in R (that is awesome!) and you think reproducibility is cool. You share code, collaborate, and maybe use GitHub occasionally, but the phrase ‘containerization of computational environments’ doesn’t really resonate. Have no fear, you are not alone! Sharing your code and data is a great way to take advantage of the benefits of reproducible research, and it is well documented in a variety of sources, with Marwick 2016 and Marwick and Jacobs 2017 as leading examples. However, limiting ourselves to thinking of reproducibility as only involving code and data ignores the third and equally crucial component: the environment.

Simply put, the environment of an analysis is the software, settings, and configuration of the computer on which it was run. For example, if I run an analysis on my Mac with OSX version something, RStudio version 1.1.383, a few packages, and program XYZ installed, it may work perfectly in that computational environment. If I then share the code with a co-worker who tries to run it on a computer with Windows 10, RStudio 0.99b, and clang4, but without rstan and liblvm203a, the code will likely fail with a variety of hard-to-decipher error messages. The process is decidedly not reproducible. The code and data are in place, but the changes to the computational environment thwart attempts at reproducing the analysis.
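To make the idea of “the environment” a little more concrete, here is a minimal sketch (in Python, purely for illustration) that treats each computer's setup as a list of components and versions and reports where two setups disagree. The `diff_environments()` helper and both environment records are hypothetical. The entries loosely mirror the Mac versus co-worker example, and a real environment has far more moving parts than this.

```python
# A minimal, hypothetical sketch: describe each computational environment as a
# plain dictionary of component -> version (None means "not installed").
# The entries loosely mirror the example in the text; they are not real data.


def diff_environments(reference, other):
    """Return (component, expected, found) tuples for every mismatch."""
    mismatches = []
    # Look at every component that appears in either environment.
    for component in sorted(set(reference) | set(other)):
        expected = reference.get(component)
        found = other.get(component)
        if expected != found:
            mismatches.append((component, expected, found))
    return mismatches


my_mac = {
    "os": "macOS",
    "rstudio": "1.1.383",
    "rstan": "installed",
    "liblvm203a": "installed",
}

coworker_pc = {
    "os": "Windows 10",
    "rstudio": "0.99b",
    "clang": "4",
}

if __name__ == "__main__":
    for component, expected, found in diff_environments(my_mac, coworker_pc):
        print(f"{component}: expected {expected!r}, found {found!r}")
```

Each reported mismatch is a potential source of those hard-to-decipher errors, even though the code and data are identical on both machines. Writing these details down is a start. A Dockerfile goes further by turning them into instructions that can actually rebuild the environment.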

At this point, it may be tempting to step back and claim that it is user #2’s responsibility to make an environment that will run the code, especially if you won’t have to help them fix the issue. However, we can address this problem in a straightforward way, uphold the ethos of reproducibility, and make life less miserable for our future selves. This is where Docker and containers come into play.

So what is a container?

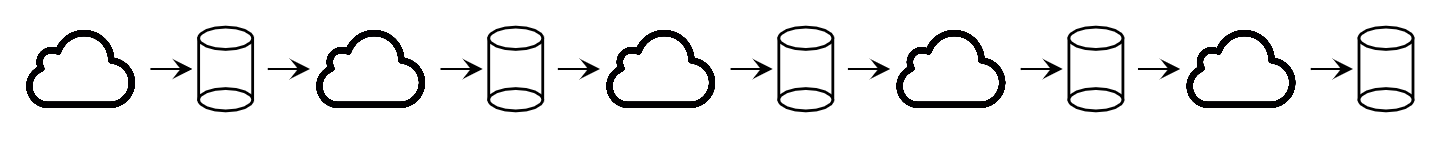

Broadly speaking, a container is a way to capture the software and system details of one computer in a set of instructions that can be used to recreate that setup on another computer, without changing the second computer’s underlying software and system. This is the same broad concept behind virtual machines, virtual environments, and containerization. Simply put, I can make your computer run just like my computer. This magic is achieved by running the Docker platform on your computer and using a Dockerfile to record the instructions for building a specific computational environment.
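To give a feel for what “a set of instructions” looks like, here is a minimal sketch that writes out a small Dockerfile from a short environment specification. `build_dockerfile()` is a hypothetical helper written for illustration, not part of Docker or liftr. The base image (`rocker/r-ver:3.4.3`), the package list, and `analysis.R` are example assumptions. Pinning a versioned base image is what records the R version. Each `RUN` line installs a package when the image is built, and `CMD` runs the analysis when a container starts.

```python
# A minimal sketch of the idea that a Dockerfile is a plain-text recipe for
# rebuilding an environment. build_dockerfile() is a hypothetical helper,
# not part of liftr or Docker; the base image and packages are examples.


def build_dockerfile(base_image, r_packages, script):
    """Render Dockerfile text from a small environment specification."""
    # A versioned base image pins the operating system and R version.
    lines = [f"FROM {base_image}"]
    for pkg in r_packages:
        # Each package is installed from CRAN when the image is built.
        lines.append(
            f"RUN R -e \"install.packages('{pkg}', repos='https://cloud.r-project.org')\""
        )
    # Copy the analysis script into the image and run it by default.
    lines.append(f"COPY {script} /home/analysis/{script}")
    lines.append("WORKDIR /home/analysis")
    lines.append(f'CMD ["Rscript", "{script}"]')
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(build_dockerfile("rocker/r-ver:3.4.3", ["ggplot2", "dplyr"], "analysis.R"))
```

Saving that text as a file named `Dockerfile` next to `analysis.R` and running `docker build` would, in principle, give anyone with Docker the same starting environment. Note that `install.packages()` as written pulls whatever package versions are current on CRAN at build time, so a fully pinned setup needs extra care. The appeal of liftr, as described in this post, is generating this kind of file from YAML-like metadata in your Rmarkdown document so you don't have to write it by hand.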

There is a ton about this that can be super complex and float well above one’s head. Fortunately, we don’t need to know all the details to use this technology! The name “container” is a pretty straightforward metaphor for what this technology does. Specifically, a shipping container is a metal box of a standard size that stacks up on any ship and moves to any port, regardless of what it carries and what language is spoken. It is a standardized unit of commerce. In that sense, a Docker container is a standardized shipping box for a computer environment: it is described by a single file (the Dockerfile), ships to any computer running Docker, and can contain any odd assortment of software, provided it is specified in the Dockerfile. The idea is that you can pack the relevant specifics of your computer, software, versions, packages, etc. into a container and ship it with your code and data, allowing an end user to recreate your computing environment and run the analysis. Reproducibility at its finest!

Continue reading “A More Reproducible Research with the liftr Package for R”